Module 7: Analytics Data Models

Generating Enriched Entities

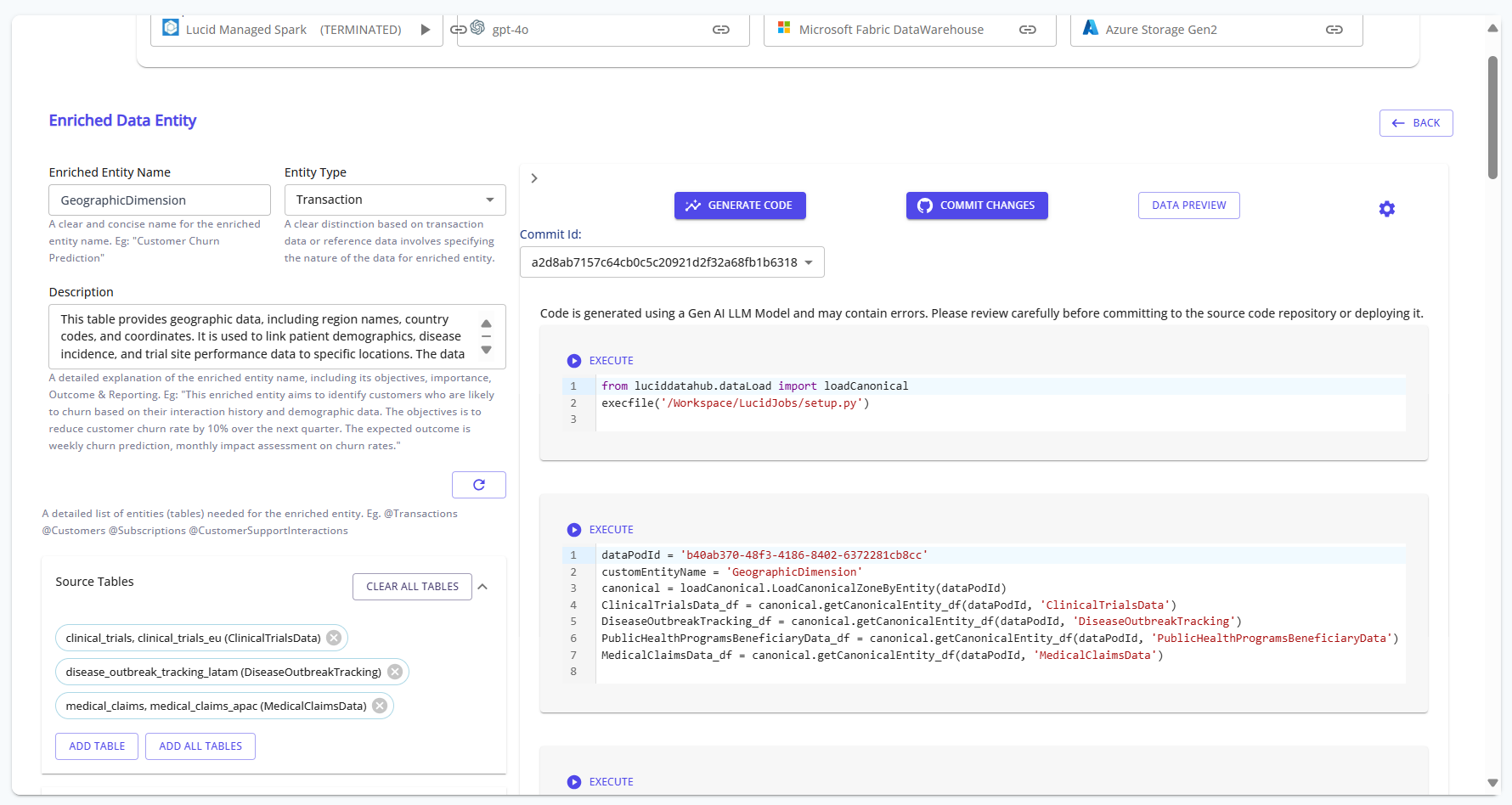

This module guides you through creating enriched entities using the Lucid Data Hub platform. Enriched entities are designed to combine and transform data from multiple sources for advanced reporting and analytics.

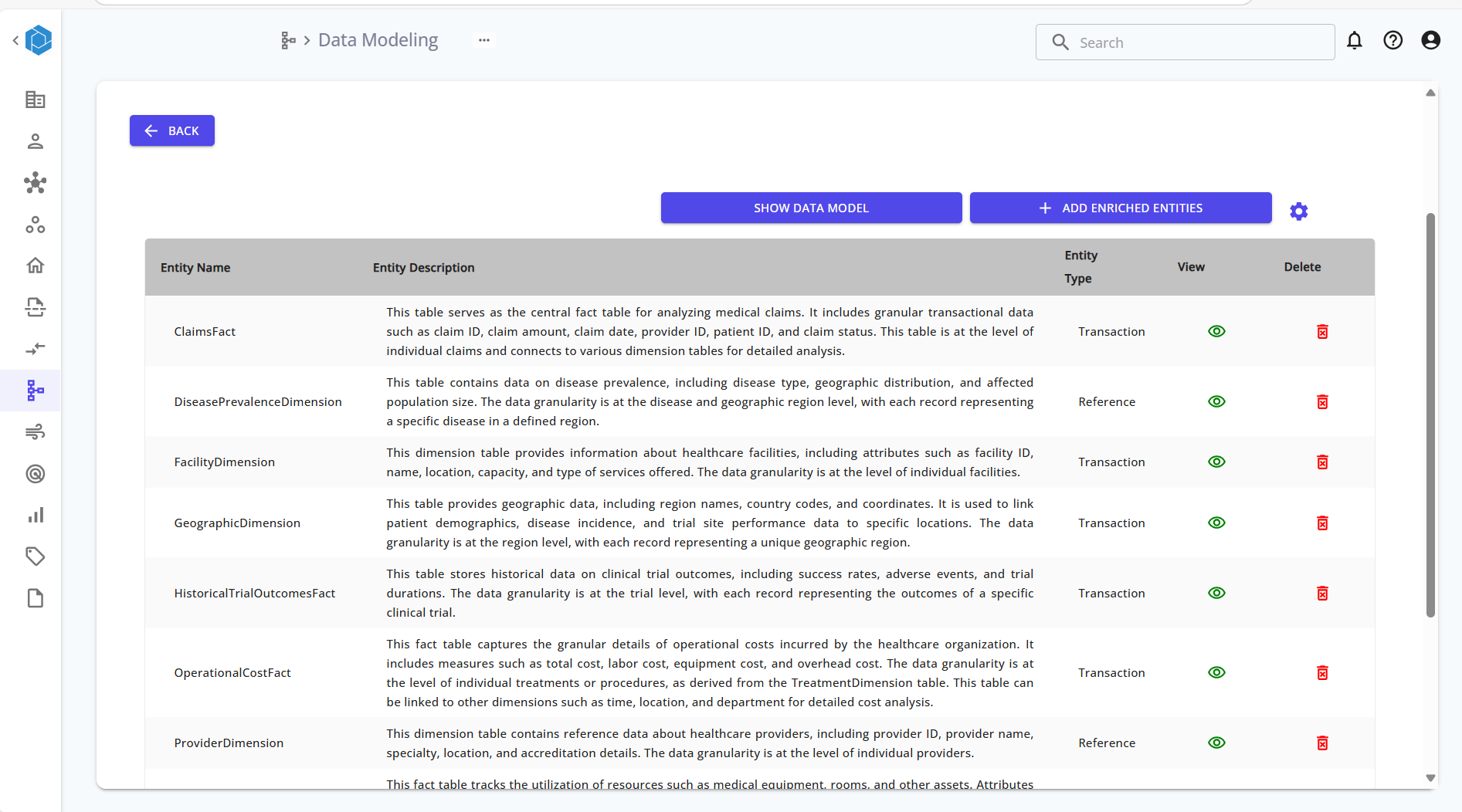

Step 1: Open the Enriched Data Modeling Section

In the left sidebar, click the Data Modeling icon. A list of available entities appears, including fact and dimension tables.

Reference:

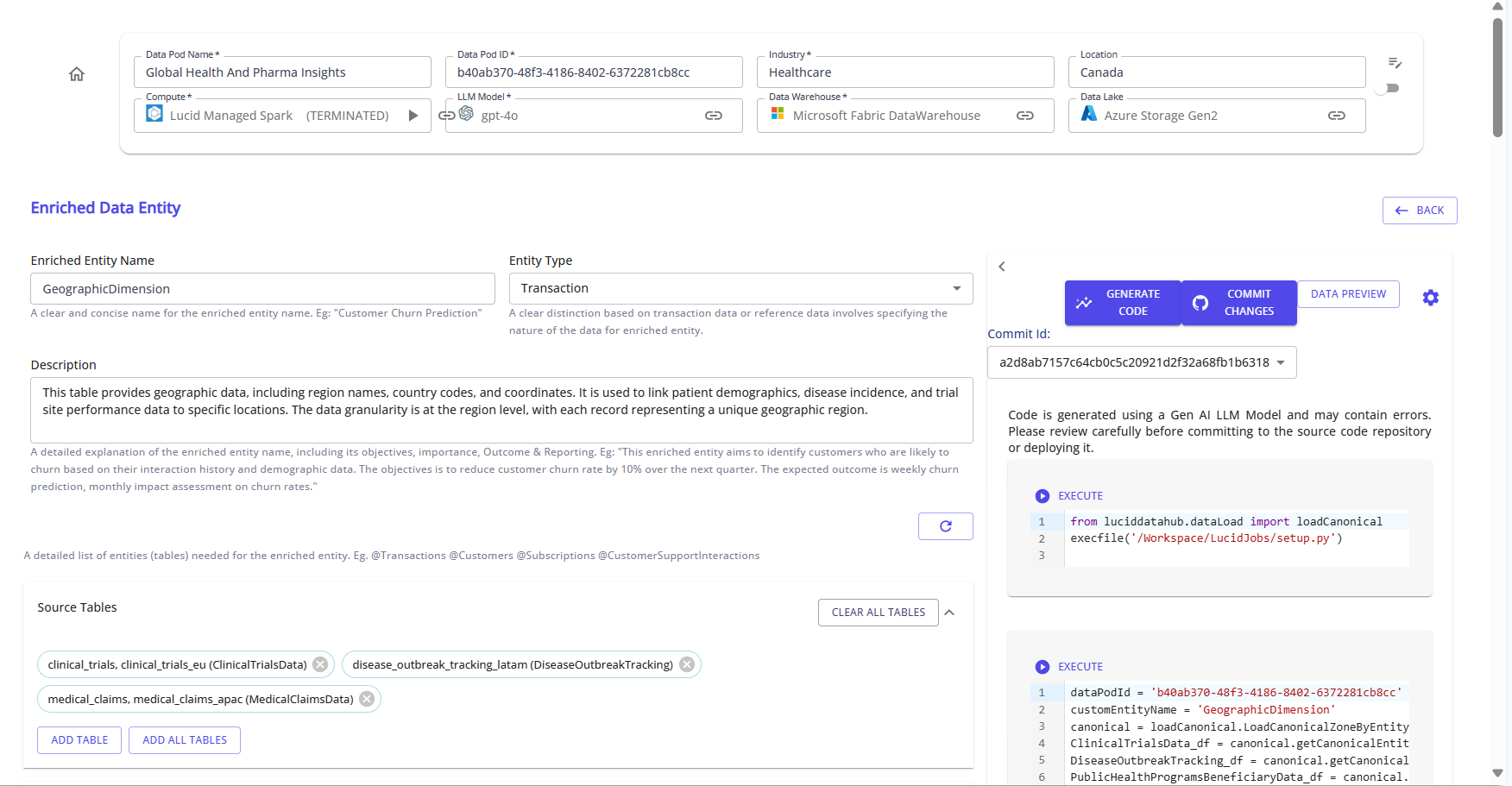

Step 2: Select or Create an Enriched Entity

Click on "Add Enriched Entities" to create a new enriched entity, or select an existing one from the list.

Provide the following:

- Enriched Entity Name

- Entity Type (e.g., Transaction or Reference)

- Description explaining the purpose, granularity, and usage of the entity.

Review the Suggested Entities. You can add or drop individual suggestions, or click the refresh icon to regenerate the entire suggestion list.

Reference:

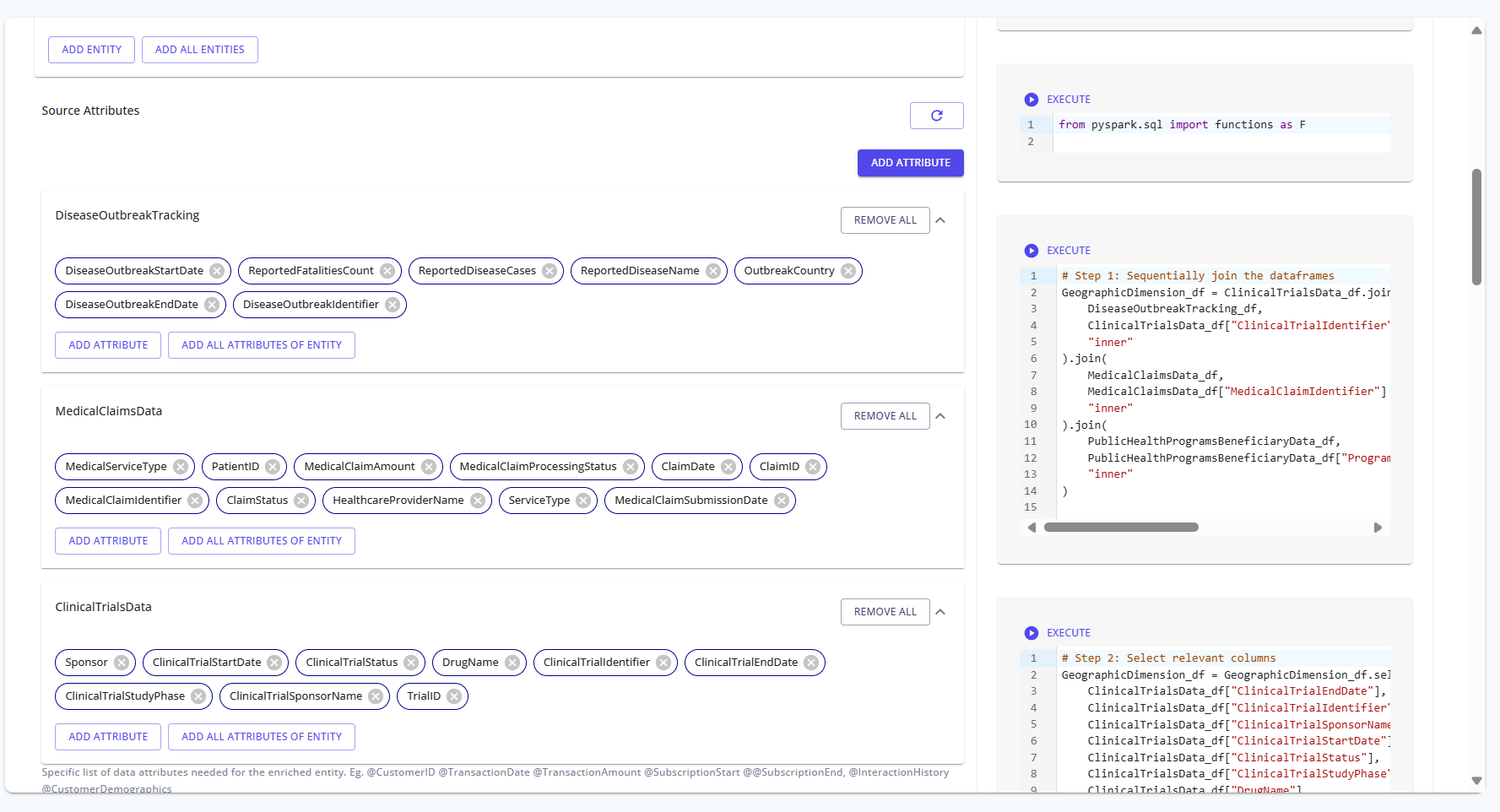
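
Before filling in the form, it can help to draft the three inputs in one place. The sketch below is only a planning aid in plain Python, not part of the Lucid Data Hub platform: the `EnrichedEntityDraft` class, its field names, and the sample values are hypothetical, and the two entity types are simply the examples named in this step.

```python
from dataclasses import dataclass

# Entity types named as examples in this step; the platform may offer others.
EXAMPLE_ENTITY_TYPES = ("Transaction", "Reference")


@dataclass
class EnrichedEntityDraft:
    """Hypothetical holder for the values entered in Step 2."""

    name: str
    entity_type: str
    description: str

    def missing_inputs(self) -> list[str]:
        """Return the form inputs that are still empty or unrecognized."""
        missing = []
        if not self.name.strip():
            missing.append("Enriched Entity Name")
        if self.entity_type not in EXAMPLE_ENTITY_TYPES:
            missing.append("Entity Type")
        if not self.description.strip():
            missing.append("Description")
        return missing


draft = EnrichedEntityDraft(
    name="customer_sales_summary",  # illustrative name
    entity_type="Transaction",
    description=(
        "Purpose: completed-order totals for sales reporting. "
        "Granularity: one row per customer and region. "
        "Usage: source for revenue dashboards."
    ),
)
print(draft.missing_inputs())  # prints [] when all three inputs are filled in
```

Writing the description as purpose, granularity, and usage, as in the sample above, keeps it aligned with what this step asks for.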

Step 3: Add Source Attributes

For each source entity, click "Add All Attributes of Entity" to bring in the required columns.

Reference:

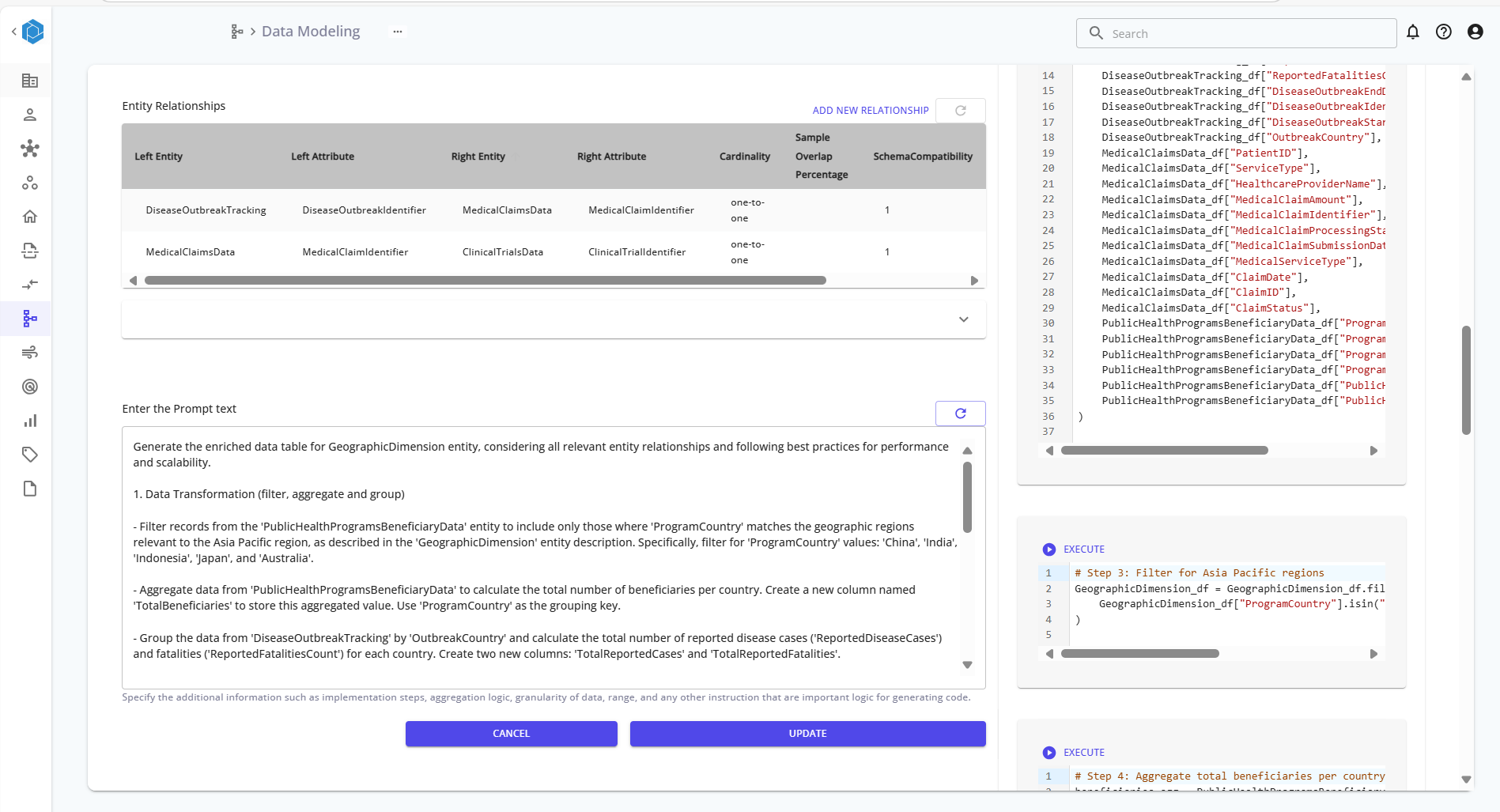

Step 4: Generate Relationships and Prompt

Define relationships between entities by linking key attributes. Once done, review or generate the transformation prompt that describes:

- Filters

- Aggregations

- Grouping logic

The prompt guides the platform in generating the correct transformation logic.
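
To make the three parts of a prompt concrete, here is a minimal sketch that assembles one from a structured description. It is not the platform's prompt format: the `Relationship` class, the `build_prompt` helper, and the `orders` and `customers` entities with their columns are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Relationship:
    """Hypothetical link between a key attribute in two entities."""

    left_entity: str
    left_key: str
    right_entity: str
    right_key: str


def build_prompt(relationships, filters, aggregations, group_by):
    """Assemble a plain-text prompt covering joins, filters, aggregations, grouping."""
    lines = ["Join the source entities as follows:"]
    for rel in relationships:
        lines.append(
            f"- {rel.left_entity}.{rel.left_key} = {rel.right_entity}.{rel.right_key}"
        )
    lines.append("Filters: " + ("; ".join(filters) if filters else "none"))
    lines.append("Aggregations: " + ("; ".join(aggregations) if aggregations else "none"))
    lines.append("Group by: " + (", ".join(group_by) if group_by else "none"))
    return "\n".join(lines)


# Illustrative entities and columns, not taken from the platform.
prompt = build_prompt(
    relationships=[Relationship("orders", "customer_id", "customers", "customer_id")],
    filters=["order_status = 'COMPLETED'"],
    aggregations=[
        "sum(order_amount) as total_order_amount",
        "count(order_id) as order_count",
    ],
    group_by=["customer_id", "region"],
)
print(prompt)
```

Stating each filter, aggregation, and grouping column explicitly, rather than describing the result loosely, gives the code generator less to guess.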


Step 5: Generate Code and Execute

Click "Generate Code" to produce the transformation logic in PySpark. You can edit the code before committing it. After reviewing the code, click "Commit Changes", then "Execute" to run the transformation and save the enriched entity.
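
The generated code depends on your entities and prompt, so the following is only a sketch of the kind of PySpark that a join, filter, group, and aggregate transformation typically involves. It is not output from the platform. The function name, the two input DataFrames, and every column name are assumptions made for illustration.

```python
def build_customer_sales(orders_df, customers_df):
    """Join, filter, group, and aggregate two source DataFrames (illustrative)."""
    # Imported inside the function so the sketch can be loaded without a Spark runtime.
    from pyspark.sql import functions as F

    # Relationship: orders.customer_id = customers.customer_id (assumed key).
    joined = orders_df.join(customers_df, on="customer_id", how="inner")

    # Filter: keep completed orders only (assumed status column and value).
    completed = joined.filter(F.col("order_status") == "COMPLETED")

    # Grouping and aggregations: one row per customer and region.
    return completed.groupBy("customer_id", "region").agg(
        F.sum("order_amount").alias("total_order_amount"),
        F.count("order_id").alias("order_count"),
    )
```

With an active Spark session, the function could be called on two DataFrames that carry these columns, for example `build_customer_sales(orders_df, customers_df).show()`. When reviewing generated code before committing, checking the join keys, filter conditions, and grouping columns against the prompt is a reasonable first pass.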


Summary of Steps

| Step | Action |
|---|---|
| 1 | Open Data Modeling section |
| 2 | Create or select an enriched entity |
| 3 | Add source tables and attributes |
| 4 | Generate relationships and transformation prompt |
| 5 | Generate, commit, and execute code |

Enriched entities enable powerful analytical models by combining multiple canonical and transactional tables, applying transformations, and materializing the result in your data lake or warehouse.